“Totalitarianism, at its essence, is an attempt at transforming reality into fiction.”

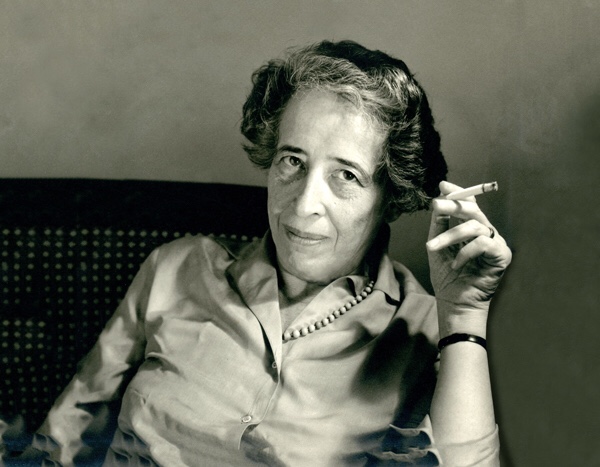

HANNAH ARENDT

The device can read ‘neural signals coming from my brain, down my spinal cord along my arm, to my wrist.’

By Raymond Wolfe

January 29, 2021 (LifeSiteNews) — New information about Facebook’s effort to develop brain-reading technology came to light last month after a recording from a company meeting was leaked to the press.

Speaking with Facebook founder and CEO Mark Zuckerberg and other top executives of the social media giant, Chief Technology Officer Mike Schroepfer previewed a sensor device that he said can read “neural signals coming from my brain, down my spinal cord along my arm, to my wrist.”

He added that “this sensor that we are building detects [neural signals], interprets them, and allows me to control [the] device.” This includes, for instance, typing or playing video games with mental commands.

Schroepfer’s remarks are the latest disclosure from the Big Tech company’s secretive, years-long effort to release a neural device. The project began with plans for a “brain mouse” that would allow users to type with their minds, as the director of Facebook’s now-defunct research lab, Building 8, announced in 2017.

Since then, Facebook has purchased neural interface startup CTRL-Labs, the developer of an experimental wristband that purports to give users the ability to operate computers by thinking.

In a post announcing the acquisition of CTRL-Labs in 2019, the head of Facebook Reality Labs, Andrew Bosworth, claimed that the wristband “will decode” neural signals and “translate them into a digital signal your device can understand.” He added that it “captures your intention so you can share a photo with a friend using an imperceptible movement,” or simply by “intending to.”

Earlier that year, Facebook had revealed details of a separate thought-reading headset in a paper published in Nature Communications. Researchers backed by the company claimed that the algorithm behind the headset could interpret speech from brain signals with 61% to 76% accuracy.

The research team published another paper in 2020 detailing an artificial intelligence system that can translate thoughts to text in real time by analyzing brain data. According to the study, the AI’s error rate was as low as 3%.

In the leaked audio from December, Schroepfer noted that Facebook uses artificial intelligence prolifically to censor certain users, celebrating that AI bots remove 95% of “hate speech.”

“Our investments in technology aren’t just about keeping our services running,” Schroepfer said. “We are paving the way for breakthrough new experiences that, without hyperbole, will improve the lives of billions.”

At the same time, Schroepfer noted Facebook’s damaged public image, which has suffered due to a wave of damning privacy scandals. Just last year, Facebook paid out $550 million to settle a class-action lawsuit that argued the company illegally collected biometric data through its facial recognition practices.

Besides Facebook, several other notable tech companies have ventured into neural technology. Last March, Microsoft patented a cryptocurrency system that incorporates wearable sensors to track users’ brain waves. Neuralink, a startup founded by Tesla CEO Elon Musk, wants to go even further, with implantable computer chips to treat neural disorders.